Article updated in March 2026 for the PMBOK® Guide — Eighth Edition.

Monitor and Control Project Performance in PMBOK 8 — Complete Guide

Formerly known as: Monitor and Control Project Work (PMBOK 6)

The dashboard showed green on every indicator. Schedule performance index: 1.02. Cost performance index: 0.98. Stakeholder satisfaction: favorable. The project manager was confident: the project was on track. Then the sponsor called. Three critical deliverables that had been marked “complete” in the PMIS were not actually complete — they had been moved to “complete” status prematurely to meet a reporting deadline. The earned value data was accurate only on paper. The real project was seven weeks behind schedule, and the work performance reports had been presenting a fiction for two months. The problem was not that the project lacked a monitoring process — it had dashboards, status reports, and weekly meetings. The problem was that the monitoring process was measuring reported data rather than verified actuals, and no one had evaluated whether the reported data was trustworthy.

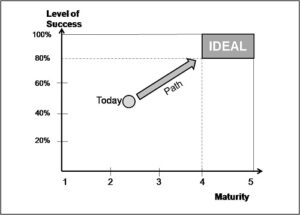

In PMBOK 8, Monitor and Control Project Performance is Process 7 of the Governance Domain. It is the process that tracks, reviews, and reports overall project progress to meet the performance objectives defined in the project management plan — providing stakeholders with a trustworthy, evidence-based view of project status, including cost and schedule forecasts. Without it, execution proceeds without accountability. With it, deviations from plan are visible early, when corrective action is still possible at manageable cost.

This complete guide covers everything a project manager or PMP candidate needs to understand, apply, and tailor this process:

- What it is — definition, position in PMBOK 8, and measurement pitfalls to avoid

- Why use it — direct benefits and the cost of skipping it

- Full ITTO — every input, tool, technique, and output explained

- Step-by-step application guide — from data collection to performance reporting

- When to apply it — triggers and mandatory vs. recommended scenarios

- Two real-world examples — Project Phoenix (website launch) and Project ProjectAdm (SaaS PM platform)

- Templates and tools — with free downloads

- Five common errors — and how to avoid each one

- Tailoring — predictive, agile, and hybrid approaches

- Process interactions — what feeds into monitoring and what depends on it

- Quick-application checklist — 10 items you can use today

1. What Is the Monitor and Control Project Performance Process

Monitor and Control Project Performance is the process of tracking, reviewing, and reporting overall project progress to meet the performance objectives defined in the project management plan. According to PMBOK 8, the key benefits of this process are that it allows stakeholders to understand the current state of the project, recognize actions taken to address any performance issues, and gain visibility into the future project status with cost and schedule forecasts.

In PMBOK 8, this is Process 7 of the nine processes in the Governance Domain. It is the primary feedback loop of the project: the mechanism that compares actual performance to planned performance, identifies variances, assesses their significance, and triggers corrective or preventive action when required.

PMBOK 8 distinguishes two complementary activities within this process:

- Monitoring: Collecting, measuring, and assessing data and trends to drive better project outcomes, maintain project health, and identify areas needing special attention. Monitoring is continuous and produces the data on which all control decisions are based.

- Controlling: Determining corrective or preventive actions, replanning, and following up on action plans to ensure that performance issues are resolved. Controlling is responsive — it activates when monitoring identifies a significant variance from the plan.

What Monitor and Control Project Performance tracks

According to PMBOK 8, this process involves:

- Evaluating performance compared to plan

- Tracking the utilization of resources, work completed, and budget expended

- Demonstrating accountability to stakeholders

- Providing information to stakeholders about project status

- Assessing whether project deliverables are on track to deliver planned benefits

- Conducting conversations about trade-offs, threats, opportunities, and options

- Evaluating current risks, identifying new ones, and monitoring risk responses

- Ensuring project deliverables will meet the customer acceptance criteria

- Updating the sourcing strategy to better meet project goals and constraints

Measurement pitfalls: what PMBOK 8 warns about

PMBOK 8 dedicates explicit attention to measurement pitfalls — risks in the measurement process itself that can undermine the integrity of monitoring. These are critical for PMP candidates and practitioners to understand:

| Pitfall | Description | How to mitigate |
|---|---|---|
| Hawthorne effect | The act of measuring something influences behavior. Measuring only deliverable volume encourages quantity over quality. | Design metrics that measure the outcomes you actually want, not proxies that can be gamed without delivering value |
| Vanity metric | A metric that shows data but does not provide useful information for decisions. Page views vs. engaged users is the classic example. | For each metric, ask: “What decision would I make differently based on this data?” If the answer is “none,” the metric is vanity. |
| Demoralization | Measures and goals set that are not achievable cause team morale to fall as the team continuously fails to meet targets. | Set challenging but achievable targets. Distinguish aspirational metrics from operational ones. Recognize effort as well as outcomes. |
| Misusing metrics | Focusing on less important metrics; performing well on short-term measures at the expense of long-term ones; working on easy tasks to improve indicators. | Define a metrics hierarchy: which metrics matter most? Tie performance recognition to the metrics that align with the project’s actual objectives. |
| Confirmation bias | Looking for and seeing information that supports preexisting views. Status reports that consistently present favorable interpretations of ambiguous data. | Require structured variance analysis for all metrics. Ask: “What does this data tell us if the project is in trouble?” before concluding it is not. |
| Correlation vs. causation | Confusing correlation of two variables with causation. Behind-schedule projects are not necessarily over budget because of schedule issues; both may have a common root cause. | Conduct root cause analysis before assuming causal relationships between performance variables. Identify and address the underlying cause, not the surface correlation. |

What changed from PMBOK 6 to PMBOK 8

| Aspect | PMBOK 6: Monitor and Control Project Work | PMBOK 8: Monitor and Control Project Performance |
|---|---|---|
| Process name | Monitor and Control Project Work | Monitor and Control Project Performance |
| Structural location | Monitoring and Controlling Process Group, Integration Management | Governance Domain, Process 7 of 9 |
| Measurement focus | Work tracking and performance reporting | Same, with explicit addition of measurement pitfall guidance and SMART metric criteria |
| Value assessment | Performance vs. plan | Explicitly includes evaluating whether decisions might enhance the project’s value proposition, not just whether work is on plan |
| Forecasting | Cost and schedule forecasts as outputs | Same, with stronger emphasis on stakeholder-facing forecast visibility |

2. Why Use the Monitor and Control Project Performance Process

Direct benefits

- Early detection of deviations: Performance monitoring identifies variances from the plan before they compound into crises. A 5% cost overrun detected in week 4 requires a minor corrective action. The same variance detected in week 12 may require a formal scope reduction, a budget request to the sponsor, or a stakeholder renegotiation.

- Evidence-based decision-making: Monitoring provides the PM and sponsor with the objective data required to make sound project governance decisions: whether to accelerate resources, whether to invoke a risk response, whether to submit a change request, or whether to escalate to the steering committee.

- Stakeholder transparency: Work performance reports produced by this process provide stakeholders with a structured, periodic, evidence-based view of project status. This transparency builds stakeholder confidence and reduces the informal status inquiries that consume PM time when stakeholders feel they are not receiving reliable information.

- Value protection: PMBOK 8 explicitly extends monitoring beyond plan compliance to include evaluating whether decisions might enhance the project’s value proposition. This framing positions monitoring not just as a control activity but as a governance activity that protects and enhances the project’s business case.

- Cost and schedule forecast accuracy: Earned value analysis and trend analysis produce forward-looking forecasts (EAC, ETC, schedule forecast at completion) that allow the PM to predict performance problems before they occur rather than report them after they have.

The cost of skipping or under-investing in monitoring

- Performance drift is invisible: Without systematic monitoring, the gap between plan and reality grows silently. The project feels like it is on track because no one is measuring the gap.

- Corrective actions are reactive: In the absence of monitoring data, the PM responds to problems after they have become visible to stakeholders — at which point they are significantly more expensive to resolve than if they had been detected early.

- Stakeholder trust erodes: Stakeholders who receive inconsistent, subjective, or insufficiently detailed status information develop a low-level anxiety about the project that expresses itself at the worst possible time — typically at acceptance review or contract renewal.

3. Inputs, Tools & Techniques, and Outputs (ITTO)

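| Inputs | Tools & Techniques | Outputs |
|---|---|---|
| Work performance information | Earned value analysis (EVA) | Work performance reports |
| Project management plan (any component) | Variance analysis | Change requests |
| Project documents: assumption log, basis of estimates, cost forecasts, issue log, lessons learned register, milestone list, quality reports, risk register, risk report, schedule forecasts | Trend analysis | |
| | Root cause analysis | |
| | Project dashboards, visual controls, and information radiators | |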

Inputs explained

Work performance information: The primary input to monitoring, consisting of the work performance data collected during Manage Project Execution after it has been processed and analyzed. Work performance data (raw observations) becomes work performance information when it has been analyzed against the project plan: actual vs. planned completion percentages, actual vs. planned costs, actual vs. planned schedule dates. In other words, work performance information is the input to monitoring, and work performance data is the raw material from which that information is produced. The distinction matters: monitoring that receives only raw data has to perform the analysis itself; monitoring that receives pre-analyzed information can focus on interpretation and decision-making.

Project management plan (any component): The planned baselines against which performance is measured. The schedule baseline, cost baseline, and scope baseline are the primary reference points for variance calculation. The quality management plan, risk management plan, and communications management plan define additional performance dimensions that monitoring tracks.

Project documents — assumption log, basis of estimates, cost forecasts, issue log, lessons learned register, milestone list, quality reports, risk register, risk report, schedule forecasts: Together, these documents provide the context within which performance data is interpreted. The assumption log reveals assumptions that may have been validated or invalidated since the last reporting period, potentially explaining performance variances. The basis of estimates enables variance analysis — if actual costs significantly exceed estimates, the basis of estimates helps identify whether the overrun reflects estimation error or execution inefficiency. The risk register identifies which risks are materializing, contributing to the understanding of why performance is deviating from plan.

Tools & Techniques explained

Earned value analysis (EVA): One of the most rigorous quantitative performance measurement methods in project management. EVA integrates scope, schedule, and cost performance into a unified, objective performance view. Key metrics: Planned Value (PV), the authorized budget for the work planned to be done; Earned Value (EV), the authorized budget for the work actually done; Actual Cost (AC), what was actually spent on that work. Derived metrics: Cost Performance Index (CPI = EV/AC), where a CPI below 1.0 means the project is spending more than planned for the work accomplished; Schedule Performance Index (SPI = EV/PV), where an SPI below 1.0 means less work has been accomplished than was planned by this date. Forecast metrics: Estimate at Completion (EAC) predicts the total project cost at completion based on current performance trends; a common formula, which assumes the current CPI persists, is EAC = BAC/CPI, where Budget at Completion (BAC) is the total approved budget. Estimate to Complete (ETC = EAC − AC) is the expected cost of the remaining work.
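The EVA formulas can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the input figures are hypothetical, and the EAC uses the common BAC / CPI variant (BAC, budget at completion, is the total approved budget), which assumes the current cost efficiency persists for the remaining work. Other EAC formulas exist for other assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EarnedValueSnapshot:
    """Cumulative earned value inputs at one status date (hypothetical values)."""
    pv: float   # Planned Value: budget for work scheduled to date
    ev: float   # Earned Value: budget for work actually completed to date
    ac: float   # Actual Cost: what the completed work actually cost
    bac: float  # Budget at Completion: total approved budget

    @property
    def cpi(self) -> float:
        return self.ev / self.ac  # below 1.0: spending more than planned per unit of work

    @property
    def spi(self) -> float:
        return self.ev / self.pv  # below 1.0: less work done than planned to date

    @property
    def cv(self) -> float:
        return self.ev - self.ac  # cost variance; negative means over budget

    @property
    def sv(self) -> float:
        return self.ev - self.pv  # schedule variance in currency; negative means behind

    @property
    def eac(self) -> float:
        # Assumption: the current CPI holds for all remaining work.
        return self.bac / self.cpi

    @property
    def etc(self) -> float:
        return self.eac - self.ac  # expected cost of the remaining work


snapshot = EarnedValueSnapshot(pv=45_000, ev=36_000, ac=40_000, bac=100_000)
print(f"CPI={snapshot.cpi:.2f} SPI={snapshot.spi:.2f}")  # CPI=0.90 SPI=0.80
print(f"EAC={snapshot.eac:,.0f} ETC={snapshot.etc:,.0f}")  # EAC=111,111 ETC=71,111
```

Keeping the snapshot immutable and deriving every index from the four raw inputs means a report can never show a CPI that disagrees with its own EV and AC.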

Variance analysis: The systematic comparison of planned performance to actual performance across all performance dimensions (schedule, cost, scope, quality, risk). Variance analysis identifies not just that a variance exists but how significant it is relative to defined thresholds: a variance within tolerance requires monitoring; a variance outside tolerance requires a corrective action or change request.
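A sketch of threshold-based variance analysis, assuming each dimension is tracked as a planned and an actual number with a tolerance expressed as a percentage of plan. The symmetric tolerance band, the `Measurement` fields, and the sample figures are illustrative assumptions; a real framework might use one-sided thresholds (for example, counting only overspend against cost).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    dimension: str
    planned: float        # baseline value to date
    actual: float         # verified actual value to date
    tolerance_pct: float  # hypothetical allowed deviation from plan, in percent


def analyze_variance(m: Measurement) -> dict[str, object]:
    """Variance against plan, its size relative to plan, and the response it implies."""
    variance = m.actual - m.planned
    variance_pct = 100.0 * variance / m.planned
    within_tolerance = abs(variance_pct) <= m.tolerance_pct
    return {
        "dimension": m.dimension,
        "variance": variance,
        "variance_pct": round(variance_pct, 1),
        "response": "keep monitoring" if within_tolerance
                    else "corrective action or change request",
    }


for m in (
    Measurement("cost: spend to date ($)", planned=30_000, actual=31_200, tolerance_pct=5),
    Measurement("scope: requirements completed", planned=40, actual=34, tolerance_pct=10),
):
    result = analyze_variance(m)
    print(result["dimension"], result["variance_pct"], result["response"])
# cost: 4.0 -> keep monitoring; scope: -15.0 -> corrective action or change request
```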

Trend analysis: Examining performance data over time to identify whether performance is improving, deteriorating, or stable. A single data point tells you where you are; trend analysis tells you where you are going. An SPI of 0.92 is concerning; an SPI trend of 0.97, 0.95, 0.93, 0.92, 0.91 is an alert that requires immediate attention, because the trend predicts continued deterioration.
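Trend analysis can be made concrete by fitting a straight line to a metric's history and projecting when it will cross its threshold. The sketch below applies ordinary least squares to the SPI series from the paragraph above, using the SPI ≥ 0.85 threshold given as an example in Step 1 of this guide. A linear projection is itself an assumption; real trends can level off or accelerate.

```python
from __future__ import annotations


def linear_trend(values: list[float]) -> tuple[float, float]:
    """Least-squares fit of value = intercept + slope * period, periods numbered 0..n-1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points for a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(range(n), values))
    slope = sxy / sxx
    return y_mean - slope * x_mean, slope


def periods_until_breach(values: list[float], threshold: float) -> float | None:
    """Projected reporting periods after the latest one until the fitted line drops
    below the threshold. Returns None when the trend is flat or improving."""
    intercept, slope = linear_trend(values)
    if slope >= 0:
        return None
    breach_period = (threshold - intercept) / slope
    return max(0.0, breach_period - (len(values) - 1))


spi_history = [0.97, 0.95, 0.93, 0.92, 0.91]
print(round(periods_until_breach(spi_history, threshold=0.85), 1))  # 3.7
```

Reporting "about four periods until breach" turns a snapshot number into a deadline for corrective action.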

Root cause analysis: When significant variances are identified, root cause analysis determines the underlying cause rather than the surface symptom. A schedule variance may be attributed to “insufficient resources” at the surface level. Root cause analysis may reveal that the actual cause is a specific interface between two workstreams that has not been coordinated. Corrective actions that address root causes resolve variances; corrective actions that address surface symptoms create recurring variances.

Project dashboards, visual controls, and information radiators: Visual representations of performance data that make project status immediately comprehensible to the PM, team, and stakeholders. Project dashboards consolidate multiple performance metrics into a single visual view. Information radiators (physical or digital) make performance visible to everyone in the project environment without requiring a report request — the data is always visible. Visual controls (sprint burndown charts, cumulative flow diagrams, risk heatmaps) translate complex performance data into intuitive formats that support rapid assessment and decision-making.

Outputs explained

Work performance reports: The primary stakeholder-facing output of this process — a structured document (or dashboard) that presents the current project performance status to the appropriate audience. A work performance report at the steering committee level may present EAC, SPI, CPI, milestone completion status, top risks, and key decisions required. A team-level performance report may focus on sprint velocity, defect rates, and definition of done compliance. The content, format, and distribution frequency of work performance reports should be defined in the communications management plan and tailored to each stakeholder audience’s needs.

Change requests: Monitoring frequently identifies conditions that require formal changes to the project baseline. A cost variance that exceeds the defined threshold triggers a change request for additional budget. A schedule variance that cannot be recovered within the existing plan triggers a change request for schedule extension or scope reduction. Monitoring-driven change requests are submitted to the Assess and Implement Changes process for evaluation and decision.

4. How to Apply the Process Step by Step

Step 1 — Define performance metrics and thresholds in the quality management plan

Before execution begins, define which metrics will be monitored, how they will be measured, what thresholds constitute acceptable performance, and what actions will be triggered when thresholds are breached. Metrics must meet SMART criteria: Specific, Measurable, Achievable, Relevant, and Time-bound. Generic metrics like “project health” provide no monitoring value. Specific metrics like “CPI ≥ 0.90 throughout execution; SPI ≥ 0.85; defect introduction rate ≤ 3 per sprint” provide clear action triggers.
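The thresholds and triggered actions can be written down as data, which makes breaches mechanical to detect and gaps in coverage easy to spot. In this sketch the CPI, SPI, and defect thresholds come from the example above; the five dimension names follow this guide's balanced framework, while the `MetricDefinition` structure and the response texts are hypothetical.

```python
from __future__ import annotations

import operator
from dataclasses import dataclass
from typing import Callable

REQUIRED_DIMENSIONS = {"schedule", "cost", "scope", "quality", "risk"}


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    dimension: str                              # one of REQUIRED_DIMENSIONS
    comparator: Callable[[float, float], bool]  # True when the reading is acceptable
    threshold: float
    response: str                               # hypothetical action on breach


METRICS = [
    MetricDefinition("CPI", "cost", operator.ge, 0.90, "notify sponsor; corrective action plan"),
    MetricDefinition("SPI", "schedule", operator.ge, 0.85, "notify sponsor; corrective action plan"),
    MetricDefinition("defects introduced per sprint", "quality", operator.le, 3,
                     "quality review with the team"),
]


def breached(readings: dict[str, float]) -> list[tuple[str, float, str]]:
    """(metric, reading, triggered response) for every metric outside its threshold."""
    return [
        (m.name, readings[m.name], m.response)
        for m in METRICS
        if m.name in readings and not m.comparator(readings[m.name], m.threshold)
    ]


def uncovered_dimensions() -> set[str]:
    """Performance dimensions that have no metric defined yet."""
    return REQUIRED_DIMENSIONS - {m.dimension for m in METRICS}


print(breached({"CPI": 0.93, "SPI": 0.82, "defects introduced per sprint": 2}))  # only SPI
print(sorted(uncovered_dimensions()))  # ['risk', 'scope']
```

The coverage check flags that this sample framework still lacks scope and risk metrics, which is exactly the imbalance Error 4 below warns about.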

Step 2 — Collect work performance information at defined intervals

At each reporting interval (weekly, bi-weekly, or sprint boundary), collect work performance information from the PMIS: actual costs incurred, actual schedule progress, work completed vs. planned, quality inspection results, risk status updates. Verify the accuracy of reported data — do not assume that status updates in the PMIS reflect actual completion. Spot-check high-risk work packages.
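The spot-check can be sketched as a comparison between earned value as reported and earned value after high-risk packages have been inspected, which is the gap that hid seven weeks of delay in the opening story. The `WorkPackage` fields, the inspection flag, and the figures are hypothetical, and the sketch credits earned value with the 0/100 rule (a package earns its budget only when complete), one of several common crediting methods.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class WorkPackage:
    name: str
    budget: float            # planned value of the whole package
    reported_complete: bool  # status as recorded in the PMIS
    high_risk: bool
    evidence_ok: bool        # hypothetical: result of inspecting the deliverable itself


def failed_spot_checks(packages: list[WorkPackage]) -> set[str]:
    """High-risk packages marked complete whose deliverable does not support that status."""
    return {p.name for p in packages
            if p.reported_complete and p.high_risk and not p.evidence_ok}


def earned_value(packages: list[WorkPackage], rejected: set[str] | None = None) -> float:
    """0/100 rule: a package earns its full budget only if complete and not rejected."""
    rejected = rejected or set()
    return sum(p.budget for p in packages
               if p.reported_complete and p.name not in rejected)


packages = [
    WorkPackage("Checkout flow", 12_000, True, True, False),
    WorkPackage("Payment API", 15_000, True, True, True),
    WorkPackage("Help pages", 3_000, True, False, True),
    WorkPackage("Search", 8_000, False, True, False),
]
rejected = failed_spot_checks(packages)
print(earned_value(packages), earned_value(packages, rejected))  # 30000 18000
```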

Step 3 — Analyze performance data using EVA and variance analysis

Calculate earned value metrics (EV, PV, AC, CPI, SPI, EAC, ETC) at each reporting interval. Conduct variance analysis: which activities are behind schedule? Which are over budget? Which deliverables have quality issues? Conduct trend analysis: are variances improving or deteriorating? Apply root cause analysis to variances outside defined thresholds before recommending corrective action.

Step 4 — Produce work performance reports

Generate work performance reports tailored to each stakeholder audience. Steering committee reports focus on strategic metrics, forecasts, and decisions required. Team reports focus on operational metrics and blockers. All reports present facts first, then analysis, then recommended actions. Reports that mix facts with opinions without labeling the distinction undermine the integrity of the monitoring process.
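One way to enforce the facts, then analysis, then recommendations order is to make the report structure itself fixed, as in this minimal sketch. The `PerformanceReport` class and its section names are hypothetical; in practice the content, format, and audiences would come from the communications management plan.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PerformanceReport:
    audience: str
    facts: list[str] = field(default_factory=list)            # measured, verified data only
    analysis: list[str] = field(default_factory=list)         # interpretation, labeled as such
    recommendations: list[str] = field(default_factory=list)  # proposed actions, decisions required

    def render(self) -> str:
        """Plain-text report with sections always in the order facts, analysis, recommendations."""
        lines = [f"Performance report for: {self.audience}"]
        sections = (("Facts", self.facts),
                    ("Analysis", self.analysis),
                    ("Recommended actions", self.recommendations))
        for title, items in sections:
            lines.append("")
            lines.append(title)
            lines.extend(f"- {item}" for item in (items or ["(none)"]))
        return "\n".join(lines)


report = PerformanceReport(
    audience="Steering committee",
    facts=["CPI 0.93 and SPI 0.82 at the end of period 6"],
    analysis=["SPI is below the 0.85 threshold for the first time and still declining"],
    recommendations=["Approve a change request to restore the planned resource allocation"],
)
print(report.render())
```

Because opinion can only enter through the `analysis` and `recommendations` fields, a reader always knows which statements are measured and which are interpreted.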

Step 5 — Submit change requests for variances outside tolerance

When monitoring identifies variances that cannot be resolved within the existing authorized plan, generate formal change requests. Every change request should include the current performance metric, the variance from baseline, the root cause analysis, the proposed corrective action, and the estimated impact on project scope, schedule, cost, and risk. Change requests are submitted to the Assess and Implement Changes process.
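The required content of a change request can double as a completeness check before submission. This sketch assumes a hypothetical `ChangeRequest` record with one text field per item listed above; it is not the schema of any particular PMIS.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class ChangeRequest:
    """Hypothetical record holding the content Step 5 requires in every change request."""
    current_metric: str          # e.g. "CPI = 0.86"
    variance_from_baseline: str
    root_cause: str
    proposed_action: str
    impact_scope: str
    impact_schedule: str
    impact_cost: str
    impact_risk: str

    def missing_fields(self) -> list[str]:
        """Names of fields left blank; a request with gaps is not ready for submission."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


cr = ChangeRequest(
    current_metric="CPI = 0.86",
    variance_from_baseline="CPI 0.04 below the 0.90 threshold",
    root_cause="Hypothetical: vendor rate increase not in the basis of estimates",
    proposed_action="Renegotiate rate; add budget for the remaining work",
    impact_scope="None",
    impact_schedule="None",
    impact_cost="Hypothetical increase, to be quantified",
    impact_risk="",
)
print(cr.missing_fields())  # ['impact_risk']
```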

5. When to Apply the Process

Mandatory scenarios

- Throughout project execution: Monitoring is continuous. There is no phase of project execution where performance does not need to be tracked.

- At each stakeholder reporting interval: Work performance reports must be produced at the frequency defined in the communications management plan, without exception.

- At phase gates: Phase gate reviews require a comprehensive performance assessment that covers all performance dimensions — schedule, cost, scope, quality, and risk — as the basis for the phase authorization decision.

Recommended scenarios

- When performance trends show early deterioration: Do not wait for a threshold to be breached. If trend analysis shows SPI declining for three consecutive reporting periods but still above the threshold, initiate root cause analysis and corrective planning before the threshold is breached.

- When significant risks materialize: A risk event that materializes during execution requires an immediate performance impact assessment, not a wait until the next scheduled reporting interval.
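The early-deterioration rule above can be expressed as a small check. This sketch interprets "declining for three consecutive reporting periods" as three successive period-over-period decreases (so it needs four data points); that reading, the function name, and the sample values are assumptions.

```python
from __future__ import annotations


def early_warning(history: list[float], threshold: float, periods: int = 3) -> bool:
    """True if the metric fell in each of the last `periods` reporting periods
    while its latest value is still at or above the breach threshold."""
    if len(history) < periods + 1:
        return False  # not enough data to observe `periods` consecutive declines
    recent = history[-(periods + 1):]
    declining = all(later < earlier for earlier, later in zip(recent, recent[1:]))
    return declining and history[-1] >= threshold


print(early_warning([0.97, 0.95, 0.93, 0.91], threshold=0.85))  # True: start root cause analysis
print(early_warning([0.97, 0.95, 0.96, 0.93], threshold=0.85))  # False: decline interrupted
```

Once the value drops below the threshold the function returns False on purpose, because at that point the formal threshold rule, not the early warning, applies.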

6. Practical Examples

Example 1 — Website Launch: Project Phoenix

Context: Alex Morgan, PMP, is managing Project Phoenix, a 90-day website redesign for TechCorp, whose CEO is Sarah Chen. Budget: $72,250. 2-week sprints.

How Monitor and Control Project Performance was applied:

Alex defined four primary performance metrics at planning: sprint velocity vs. planned velocity (target: ≥ 85% of planned story points per sprint), budget utilization vs. planned (target: CPI ≥ 0.92), defect introduction rate (target: ≤ 3 per sprint), and milestone adherence (target: 100% of milestones met within 3 business days of planned date).

At Sprint 2 review, the sprint velocity metric triggered an alert: actual velocity was 68% of planned. The work performance report presented to Sarah Chen showed the metric, the variance, the trend (one sprint below threshold), and the root cause analysis (developer resource split between projects). The report included a specific change request recommendation. The change request was approved within three business days, the resource allocation was restored, and Sprint 3 velocity returned to 94% of planned. The correction was made at week 4, when the cumulative schedule impact was one sprint. Without formal monitoring, the deviation would likely have continued for several more sprints before becoming visible, at which point the project would have been 5–6 weeks behind plan rather than one sprint.

At project close, Alex produced a final performance report comparing planned vs. actual for all four metrics across all nine sprints. The report became part of the project archive and was referenced at the agency’s quarterly performance review as an example of effective early-detection monitoring.

Example 2 — SaaS PM Platform: Project ProjectAdm

Context: Eduardo Montes leads ProjectAdm development. Team: 8 developers + 2 designers + 1 QA. Duration: 18 months. Hybrid approach.

How Monitor and Control Project Performance was applied:

ProjectAdm’s hybrid approach required a hybrid monitoring framework. On the predictive track, Eduardo used earned value analysis against the compliance and infrastructure milestone plan: five major milestones over 18 months, each with a defined planned value, and earned value calculated at each milestone completion. On the agile track, Eduardo used velocity and burndown charts, defect rates, and definition of done compliance metrics to monitor sprint-level performance.

The monthly performance report for co-sponsor Henry Douglas presented a unified view: EVM metrics for the predictive milestones, agile velocity and quality metrics for the sprint track, a risk dashboard showing the top five active risks and their current status, and a financial summary comparing budget utilization to planned expenditure. Every report included a section labeled “decisions required” — specific topics that required co-sponsor input before the next month’s reporting interval.

At month 8, the earned value analysis produced an EAC forecast that was 4.3% above the approved budget, driven by the AWS multi-region architecture’s higher-than-estimated ongoing infrastructure costs. Eduardo presented the variance at the monthly review with three scenarios: (1) accept the overrun and adjust the budget; (2) optimize the infrastructure configuration to reduce costs; (3) defer two non-critical features to release 1.1. The co-sponsors selected option 2, Eduardo generated a change request for the infrastructure optimization work, and the corrected EAC returned to within 1.2% of budget by month 10. The entire correction cycle — from variance detection to corrected trajectory — took 7 weeks. Without earned value analysis, the variance would not have been visible until actual expenditures at month 12 exceeded the budget line.

7. Free and Recommended Templates

| Document | Free blank template |
|---|---|
| Status Report (Software Development): sprint velocity, defect rates, milestone status, budget utilization, risks, decisions required | Download free template |
| Earned Value Analysis Dashboard: PV, EV, AC, CPI, SPI, EAC, ETC, calculated and trended over reporting periods | Download free template |

Recommended digital tools

- Power BI / Tableau / Google Looker Studio: For building integrated project performance dashboards that automatically pull data from the PMIS and present EVA metrics, velocity trends, and risk status in a unified visual.

- Jira / Azure DevOps: For agile-track monitoring — sprint burndown charts, velocity history, defect tracking, and definition of done compliance metrics.

- Microsoft Project / Primavera: For predictive-track EVM — planned value curves, earned value calculations, and schedule performance analysis.

- Confluence / Notion: For structured work performance reports, distributed to stakeholders at defined intervals with version history and comment tracking.

8. Five Common Errors — and How to Avoid Each One

Error 1 — Reporting status from PMIS data without verifying accuracy

Why it happens: The PM trusts that task status updates in the PMIS reflect actual completion. In practice, team members update task status based on schedule pressure (“the reporting deadline is tomorrow and I need to show progress”) rather than actual completion status.

How to avoid it: Establish a practice of spot-checking reported completion status for high-risk work packages against the actual deliverable or evidence of completion. For software development, “complete” should require a passing automated test suite or a peer review sign-off, not a checkbox update. Define “complete” in the definition of done before execution begins — not after the first monitoring report has already been produced.

Error 2 — Using vanity metrics as primary performance indicators

Why it happens: Vanity metrics are easy to collect, easy to report, and consistently favorable, which makes them attractive to PMs who are under pressure to show positive status. Number of meetings held, number of status reports produced, and number of tasks started (vs. completed) are all vanity metrics that look like monitoring data without providing monitoring value.

How to avoid it: For each metric in the monitoring framework, apply the decision utility test: “What decision would I make differently based on this data?” If the answer is “none,” the metric is measuring activity, not performance. Replace it with a metric that drives actionable insight.

Error 3 — Treating monitoring as a reporting activity rather than a control activity

Why it happens: Monitoring is perceived as something done “for the sponsor” rather than for the PM’s own decision-making. Status reports are produced on schedule; variances are documented; nothing changes. The monitoring activity is present; the controlling activity is absent.

How to avoid it: Define explicit control thresholds for every monitored metric. When a threshold is breached, a specific response is mandatory: issue a change request, escalate to the sponsor, convene a corrective action meeting, or adjust resource allocation. Monitoring without defined control thresholds and response protocols is record-keeping, not governance.

Error 4 — Monitoring only financial metrics

Why it happens: Financial metrics (budget utilization, CPI) are the most visible and most easily quantifiable. Scope, quality, and risk performance are harder to measure and often receive less rigorous monitoring attention.

How to avoid it: Define a balanced monitoring framework that covers all performance dimensions: schedule (SPI, milestone adherence), cost (CPI, EAC), scope (requirements completion rate, scope change frequency), quality (defect rate, definition of done compliance), and risk (risk trigger monitoring, risk response effectiveness). A project that is on budget but delivering defective outputs or drifting scope is not on track.

Error 5 — Confusing correlation with causation in variance analysis

Why it happens: When a project is both behind schedule and over budget, it is tempting to conclude that the schedule delay caused the budget overrun. This conclusion may be wrong — both variances may have a common root cause (inadequate planning estimates, a resource conflict, a technical complexity that was underestimated) that would not be addressed by focusing corrective action on the schedule variance alone.

How to avoid it: Apply structured root cause analysis to every significant variance. Use cause-and-effect (fishbone) diagrams to surface the multiple potential root causes of a variance before committing to a corrective action. The corrective action should address the root cause, not the correlated symptom.

9. Tailoring: Predictive, Agile, and Hybrid

Predictive approach

- EVM as the primary framework: Earned value analysis provides the quantitative performance backbone for predictive project monitoring. PV, EV, AC, CPI, SPI, EAC, and ETC are calculated at each reporting interval and trended over time.

- Formal work performance reports: Structured reports produced at defined intervals (typically weekly or bi-weekly), distributed to the sponsor and steering committee, and archived as project governance documents.

- Defined variance thresholds: Formal thresholds (e.g., CPI < 0.90 or SPI < 0.85 triggers a formal corrective action and sponsor notification) documented in the project management plan.

Agile approach

- Velocity and burndown: Sprint velocity (story points completed per sprint) and sprint burndown charts are the primary performance monitoring instruments. Cumulative flow diagrams provide a visual representation of work in progress, completed, and blocked.

- Sprint reviews as stakeholder reporting: Sprint reviews serve as the primary stakeholder visibility mechanism — they demonstrate actual working increments rather than progress percentages, providing more meaningful evidence of project health.

- Continuous monitoring: In agile contexts, monitoring happens daily (via the PMIS and daily stand-ups) rather than only at formal reporting intervals.
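The agile instruments above reduce to simple arithmetic. This sketch compares a sprint's actual remaining work with the straight-line ideal burndown and computes velocity as a share of plan (the form of the 85% velocity target in the Project Phoenix example). The function names, the 40-point, 10-day sprint, and the daily figures are hypothetical.

```python
from __future__ import annotations


def ideal_burndown(total_points: float, sprint_days: int) -> list[float]:
    """Straight-line remaining work: total_points on day 0 down to zero on the last day."""
    return [total_points * (sprint_days - day) / sprint_days for day in range(sprint_days + 1)]


def burndown_gap(total_points: float, sprint_days: int, remaining: list[float]) -> list[float]:
    """Actual minus ideal remaining work for each day recorded so far.

    Positive values mean the sprint is behind the ideal line."""
    ideal = ideal_burndown(total_points, sprint_days)
    return [actual - planned for actual, planned in zip(remaining, ideal)]


def velocity_ratio(completed_points: float, planned_points: float) -> float:
    """Share of planned story points actually completed in a sprint."""
    return completed_points / planned_points


remaining_by_day = [40, 38, 35, 33, 30, 28]  # hypothetical: first six days of a 10-day sprint
print(burndown_gap(40, 10, remaining_by_day))  # [0.0, 2.0, 3.0, 5.0, 6.0, 8.0]
print(velocity_ratio(34, 50))  # 0.68
```

A gap that widens every day, as here, is the burndown equivalent of a declining SPI trend and is visible at the daily stand-up, well before the sprint review.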

Hybrid approach (ProjectAdm model)

- Dual monitoring framework: EVM for the predictive milestones; velocity and quality metrics for the agile sprints. Both sets of metrics presented in a unified monthly performance report.

- Tiered reporting: Daily PMIS data for the team; weekly sprint metrics for the PM; monthly integrated performance report for the co-sponsors.

10. Interactions with Other Processes and Domains

| Process | Domain | Relationship |
|---|---|---|
| Manage Project Execution (Process 4) | Governance | Execution generates work performance data; monitoring converts it into work performance information and reports |
| Assess and Implement Changes (Process 8) | Governance | Monitoring identifies variances that require change requests; Assess and Implement Changes processes and decides on those requests |
| Manage Project Knowledge (Process 6) | Governance | Performance monitoring data and variance analysis insights feed the lessons learned register |
| Manage Quality Assurance (Process 5) | Governance | Quality reports produced by quality assurance are inputs to performance monitoring; quality metrics are monitored dimensions |
| Risk Management processes | Risk | Performance monitoring tracks risk trigger indicators; new risks identified during monitoring are escalated to the risk register |

11. Quick-Application Checklist

- Are specific, measurable performance metrics defined for all key performance dimensions (schedule, cost, scope, quality, risk)?

- Are variance thresholds defined for each metric, with documented response protocols when thresholds are breached?

- Is work performance information being collected at defined intervals and verified for accuracy before analysis?

- Is earned value analysis (or equivalent agile velocity analysis) being applied at each reporting interval?

- Is trend analysis being performed — are metrics being tracked over time, not only at individual reporting points?

- Are work performance reports produced at the frequency defined in the communications management plan and distributed to all relevant stakeholders?

- Do work performance reports present forecasts (EAC, schedule forecast) in addition to current status?

- Are variances outside tolerance being analyzed for root cause before corrective action is recommended?

- Are monitoring-driven change requests submitted to the Assess and Implement Changes process with full impact analysis?

- Is the monitoring framework reviewed for measurement pitfalls (vanity metrics, Hawthorne effects, confirmation bias) at least once during project execution?
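The second checklist item (variance thresholds with documented response protocols) can be made concrete with a small lookup. The sketch below is illustrative only. It assumes two index metrics where lower is worse (CPI and SPI), a hypothetical warning band at 0.95 and a critical band at 0.90, and made-up response protocol text. Real thresholds and responses come from your project management plan, not from PMBOK itself.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Hypothetical tolerance band for an index where lower values are worse (e.g. CPI, SPI)."""
    metric: str
    warning: float   # below this: flag for review at the next interval
    critical: float  # below this: trigger the documented response protocol
    response: str    # illustrative response protocol text


# Example thresholds only; real values come from the project management plan.
THRESHOLDS = [
    Threshold("CPI", warning=0.95, critical=0.90,
              response="Root cause analysis, then change request if needed"),
    Threshold("SPI", warning=0.95, critical=0.90,
              response="Root cause analysis, then re-plan or escalate to sponsor"),
]


def evaluate(readings: dict[str, float]) -> list[tuple[str, str, str]]:
    """Compare verified readings with each threshold; return (metric, status, action)."""
    results = []
    for t in THRESHOLDS:
        value = readings.get(t.metric)
        if value is None:
            # No verified reading: the checklist asks for verification before analysis.
            results.append((t.metric, "MISSING", "Collect and verify data before analysis"))
        elif value < t.critical:
            results.append((t.metric, "CRITICAL", t.response))
        elif value < t.warning:
            results.append((t.metric, "WARNING", "Review the trend at the next interval"))
        else:
            results.append((t.metric, "OK", "No action required"))
    return results


for metric, status, action in evaluate({"CPI": 0.93, "SPI": 0.88}):
    print(f"{metric}: {status} -> {action}")
```

Keeping the response text next to the threshold is a deliberate choice: when a breach is reported, the agreed reaction is reported with it, so the report itself prompts control rather than just record-keeping.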

Conclusion and Next Steps

Monitor and Control Project Performance is the process that keeps the gap between plan and reality visible — and manageable. Project Phoenix’s Sprint 2 velocity deviation, detected at week 4 and corrected by week 7, cost one sprint of schedule adjustment. The same deviation, undetected, would have cost four to six sprints and a formal budget amendment. ProjectAdm’s EAC variance at month 8, detected through earned value analysis and corrected through an infrastructure optimization change request, prevented a budget overrun that would have required co-sponsor intervention and potentially a scope reduction. In both cases, the value of monitoring was not in confirming that the project was on track. It was in detecting, quantifying, and enabling the correction of a deviation before it became irreversible.
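An EAC variance like the one in the ProjectAdm example comes from standard earned value arithmetic. The sketch below uses the formulas familiar from earlier PMBOK editions: CPI = EV / AC, EAC = BAC / CPI when current cost efficiency is expected to continue, EAC = AC + (BAC − EV) when the variance so far is treated as a one-off, and VAC = BAC − EAC. Whether PMBOK 8 presents these exact forms is not confirmed here, and the budget figures are hypothetical, not ProjectAdm's real data.

```python
def cpi(ev: float, ac: float) -> float:
    """Cost performance index: earned value divided by actual cost."""
    return ev / ac


def eac_typical(bac: float, ev: float, ac: float) -> float:
    """EAC if current cost efficiency persists: BAC / CPI."""
    return bac / cpi(ev, ac)


def eac_atypical(bac: float, ev: float, ac: float) -> float:
    """EAC if the variance so far is a one-off: AC + (BAC - EV)."""
    return ac + (bac - ev)


def vac(bac: float, eac: float) -> float:
    """Variance at completion; a negative value means a forecast overrun."""
    return bac - eac


# Hypothetical cumulative figures at a monthly review (currency units).
BAC, EV, AC = 1_000_000, 600_000, 650_000
for label, eac in (("typical", eac_typical(BAC, EV, AC)),
                   ("atypical", eac_atypical(BAC, EV, AC))):
    print(f"{label}: EAC={eac:,.0f}  VAC={vac(BAC, eac):,.0f}")
```

With these figures CPI is about 0.92, so the typical forecast is about 1,083,333 (an overrun of roughly 83,333), while the atypical forecast is 1,050,000. Reporting both shows stakeholders how much of the forecast depends on the assumption that current performance will continue.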

Three takeaways for immediate application:

- Metrics must be SMART to be useful: Performance metrics that do not meet the SMART criteria — Specific, Measurable, Achievable, Relevant, and Time-bound — produce monitoring activity without monitoring value. Review your metrics framework. Replace vanity metrics with decision-driving metrics.

- Trend analysis is more valuable than snapshot analysis: A single data point tells you where you are. A trend tells you where you are going. The most actionable monitoring insight is not “CPI is 0.93 today” but “CPI has been declining for four consecutive reporting periods and is on track to breach the 0.90 threshold in two weeks.”

- Monitoring without control is record-keeping: If your monitoring process identifies variances but no corrective actions follow, you are maintaining a history of the project’s problems, not managing them. Define control thresholds. Define response protocols. Make corrective action automatic when thresholds are breached.
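A statement such as "on track to breach the 0.90 threshold in two weeks" needs a forecasting rule. One simple option, assumed here and not prescribed by PMBOK, is to fit a least-squares line through the recent CPI readings and extrapolate it to the threshold. The CPI history below is hypothetical, and the result is in reporting periods, so its meaning in weeks depends on your reporting cadence.

```python
from __future__ import annotations

from typing import Optional


def linear_fit(values: list[float]) -> tuple[float, float]:
    """Least-squares line through (period index, value); returns (slope, intercept)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def periods_until_breach(values: list[float], threshold: float) -> Optional[float]:
    """Reporting periods until the fitted trend drops below threshold.

    Returns 0.0 if the latest reading is already below it,
    and None if the trend is flat or improving.
    """
    if values[-1] < threshold:
        return 0.0
    slope, intercept = linear_fit(values)
    if slope >= 0:
        return None
    crossing = (threshold - intercept) / slope  # period index where the line meets the threshold
    return max(crossing - (len(values) - 1), 0.0)


cpi_history = [0.99, 0.97, 0.95, 0.93]  # hypothetical: four consecutive declines
print(f"{periods_until_breach(cpi_history, 0.90):.1f} periods to breach")
```

For this history the fitted slope is about −0.02 per period, so the line reaches 0.90 roughly 1.5 periods after the latest reading. A straight line is a crude model; it is useful as an early warning, not as a precise prediction.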

Your concrete next step: Open your most recent work performance report. Identify your current CPI and SPI. If either is below 1.0, run a trend analysis for the last four reporting intervals. If the trend is declining, initiate root cause analysis before the next reporting interval. If you do not have a work performance report, that is your first action item: define three SMART metrics for your project, establish a collection and reporting cadence, and produce your first report by next week.
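The next-step rule in the previous paragraph can be written as a short decision function: compute CPI = EV / AC and SPI = EV / PV, and for any index below 1.0, check whether it has fallen at every one of the last four intervals. The `Snapshot` record, the `next_step` function, and the weekly figures are hypothetical illustrations, and "declining" is assumed to mean strictly lower at each interval.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Cumulative earned value data at one reporting interval (currency units)."""
    period: str
    pv: float  # planned value
    ev: float  # earned value
    ac: float  # actual cost

    @property
    def cpi(self) -> float:
        return self.ev / self.ac

    @property
    def spi(self) -> float:
        return self.ev / self.pv


def is_declining(series: list[float]) -> bool:
    """True if each value is strictly lower than the one before it."""
    return all(later < earlier for earlier, later in zip(series, series[1:]))


def next_step(history: list[Snapshot], window: int = 4) -> str:
    """Index below 1.0 -> check the last `window` intervals -> root cause analysis if declining."""
    latest = history[-1]
    below = [name for name in ("cpi", "spi") if getattr(latest, name) < 1.0]
    if not below:
        return "CPI and SPI at or above 1.0: continue routine monitoring"
    labels = ", ".join(below).upper()
    recent = history[-window:]
    if len(recent) < window:
        return f"{labels} below 1.0: collect {window} intervals before judging the trend"
    declining = [n for n in below if is_declining([getattr(s, n) for s in recent])]
    if declining:
        return f"{', '.join(declining).upper()} declining: start root cause analysis before the next interval"
    return f"{labels} below 1.0 but not declining: keep watching the trend"


history = [
    Snapshot("W1", pv=100, ev=98, ac=99),
    Snapshot("W2", pv=200, ev=192, ac=198),
    Snapshot("W3", pv=300, ev=282, ac=297),
    Snapshot("W4", pv=400, ev=372, ac=400),
]
print(next_step(history))
```

In this made-up history both indices fall every week (CPI from about 0.99 to 0.93, SPI from 0.98 to 0.93), so the function recommends root cause analysis for both.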

Disclaimer

This article is an independent educational interpretation of the PMBOK® Guide – Eighth Edition, developed for informational purposes by ProjectManagement.com.br. It does not reproduce or redistribute proprietary PMI content. All trademarks, including PMI, PMBOK, and Project Management Institute, are the property of the Project Management Institute, Inc. For access to the complete and official content, purchase the guide from Amazon or download it for free at https://www.pmi.org/standards/pmbok if you are a PMI member.