Article updated in March 2026 for the PMBOK® Guide — Eighth Edition.

Manage Quality Assurance in PMBOK 8 — Complete Guide

Formerly known as: Manage Quality (PMBOK 6)

The deliverables were technically correct. The code passed all functional tests. The features matched the acceptance criteria in the requirements document. And the client still rejected the project. Not because the product was broken — but because the process used to build it had produced an outcome that no one trusted. Deadlines had been missed without documented explanations. Quality reports had never been produced. The development team had no formal process for peer code reviews. The client’s business representative had been excluded from sprint reviews in months two and three “to save time.” When the final product was delivered, the client had three months of accumulated doubt about whether the right processes had been followed — and no auditable record to reassure them. The project failed a quality assurance test it had never been given.

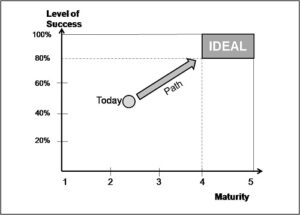

In PMBOK 8, Manage Quality Assurance is Process 5 of the Governance Domain. It is the process that ensures project processes — not just project deliverables — are performed in a manner consistent with stakeholder expectations. It is the difference between a project that accidentally produces good outputs and a project that systematically produces trustworthy outcomes through a governance framework that stakeholders can observe, audit, and rely on.

This complete guide covers everything a project manager or PMP candidate needs to understand, apply, and tailor this process:

- What it is — definition, position in PMBOK 8, and the critical distinction between quality assurance and quality control

- Why use it — direct benefits and the cost of skipping it

- Full ITTO — every input, tool, technique, and output explained

- Step-by-step application guide — from quality planning to audit execution

- When to apply it — triggers and mandatory vs. recommended scenarios

- Two real-world examples — Project Phoenix (website launch) and Project ProjectAdm (SaaS PM platform)

- Templates and tools — with free downloads

- Five common errors — and how to avoid each one

- Tailoring — predictive, agile, and hybrid approaches

- Process interactions — what feeds into quality assurance and what depends on it

- Quick-application checklist — 10 items you can use today

1. What Is the Manage Quality Assurance Process

Manage Quality Assurance is the process of ensuring project processes are performed in a manner consistent with stakeholder expectations. It translates the project management plan — and specifically the quality management plan — into executable activities that incorporate the organization’s standards, regulations, and policies. According to PMBOK 8, its key benefit is that it increases the probability of meeting the project objectives and identifies ineffective processes and the causes of poor project performance.

In PMBOK 8, this is Process 5 of the 9 processes in the Governance Domain. Unlike most governance processes, which are performed at specific project phases, Manage Quality Assurance is performed continuously throughout the project, from execution through closure.

Quality Assurance vs. Quality Control: the critical distinction

PMBOK 8 explicitly differentiates between quality assurance and quality control as two distinct, complementary efforts:

| Dimension | Quality Assurance | Quality Control |
|---|---|---|
| Focus | Project processes: are we doing things the right way? | Project deliverables: are the outputs meeting defined specifications? |
| Purpose | Assure stakeholders that the project’s processes will produce outputs meeting their expectations | Inspect outputs against specifications and thresholds; identify defects for remediation |
| Related concepts | Regulations, compliance, audits, process improvement | Product design, testing, defects, acceptance criteria |
| Governance process | Manage Quality Assurance (PMBOK 8 Process 5) | Addressed within Scope Management and deliverable verification |
| Timing | Continuous throughout the project | Applied to each deliverable as it is produced |

A team can have perfect quality control (every deliverable passes inspection) and still fail quality assurance (the processes used to produce those deliverables were chaotic, undocumented, and unrepeatable). Quality assurance is about building confidence in the system that produces value — not just in the individual outputs of that system.

What changed from PMBOK 6 to PMBOK 8

| Aspect | PMBOK 6: Manage Quality | PMBOK 8: Manage Quality Assurance |
|---|---|---|
| Process name | Manage Quality | Manage Quality Assurance |
| Structural location | Executing Process Group, Project Quality Management Knowledge Area | Governance Domain, Process 5 of 9 |
| Emphasis | Translating the quality management plan into executable quality activities; quality audits and process analysis | Explicitly positioned as process-focused; sharper distinction from quality control, which remains in the Scope/Delivery domain |
| Governance integration | Quality as a separate management area | Quality assurance integrated into the Governance domain, recognizing that process quality is fundamentally a governance responsibility |
| Adaptive coverage | Limited explicit guidance on agile quality assurance | Explicit guidance: formal in predictive contexts, formal or informal in agile/adaptive contexts based on project context |

2. Why Use the Manage Quality Assurance Process

Direct benefits

According to PMBOK 8, the Manage Quality Assurance process implements planned and systematic activities that achieve three specific outcomes:

- Build confidence that a future output will meet specified requirements: Quality assurance tools such as quality audits and failure analysis provide stakeholders with evidence-based confidence that the processes being used will reliably produce outputs meeting their expectations — before those outputs are completed. This is particularly valuable in long-duration projects where stakeholders cannot wait until the end to know whether the project is on track to deliver the right quality.

- Improve the efficiency and effectiveness of processes and activities: Quality audits identify ineffective processes before they produce defective outputs. Process improvement recommendations generated through quality assurance reduce rework, shorten cycle times, and improve team performance across the entire project lifecycle.

- Ensure proper project governance: Quality assurance creates an auditable, systematic record that the project has been managed according to defined standards and organizational policies. This record is essential for regulatory compliance, client assurance, and internal governance reviews.

The cost of skipping quality assurance

- Process failures become delivery failures: Without quality assurance, process breakdowns (missing peer reviews, inconsistent testing protocols, ad hoc communication patterns) are discovered only when they produce defective outputs — which is the most expensive point in the project at which to discover them.

- Stakeholder trust erodes silently: Stakeholders who cannot observe or audit the processes being used to build their product develop a low-level, persistent anxiety about whether the project is being managed responsibly. This anxiety does not always surface as explicit objections — but it tends to manifest at the final acceptance review as heightened scrutiny, inflated change requests, and reluctance to sign off.

- Compliance risk is unmanaged: In regulated environments (healthcare, financial services, government contracting, data privacy), the absence of documented quality assurance activities is not just a project management weakness — it is a legal and regulatory exposure. Auditors require evidence of controlled processes, not just evidence of good outputs.

- Organizational learning is blocked: Quality audits surface process improvement opportunities that benefit future projects. Organizations that skip quality assurance consistently reproduce the same process inefficiencies across multiple projects, compounding their performance deficit with each new initiative.

3. Inputs, Tools & Techniques, and Outputs (ITTO)

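| Inputs | Tools & Techniques | Outputs |
|---|---|---|
| Project management plan (all components) | Audits | Quality reports |
| Project documents (all components) | Checklists | Change requests |
| Organizational process assets (policies, procedures, and regulations) | Data representation (affinity diagrams, cause-and-effect diagrams, flowcharts) | |
| | Decision-making | |
| | Problem-solving | |
| | Process improvement | |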

Inputs explained

Project management plan (all components): The quality management plan is the most directly relevant component — it defines the quality objectives, quality standards to be applied, quality assurance activities and their schedule, quality metrics, and the roles responsible for quality oversight. However, all plan components are inputs to quality assurance: the scope baseline defines what must be delivered; the schedule baseline defines when; the cost baseline defines how much; the resource management plan defines who. Quality assurance evaluates whether the processes being used to manage all these dimensions are consistent with stakeholder expectations.

Project documents (all components): Quality assurance reviews are performed against the full documentary record of the project’s execution: lessons learned register, risk register, assumption log, issue log, work performance data, and communications. An auditor reviewing a project’s quality assurance posture will examine all of these documents — not just the quality plan.

Organizational process assets — policies, procedures, and regulations: These are the standards against which quality assurance is assessed. Organizational quality policies define the minimum acceptable process standards for any project in the organization. Industry regulations (ISO standards, GDPR compliance procedures, FDA validation requirements, OSHA safety protocols) define the mandatory process requirements that the project must meet. Failure to align execution processes with OPAs exposes the organization to compliance risk, audit findings, and in regulated industries, legal liability.

Tools & Techniques explained

Audits: Structured, independent reviews of project processes to determine whether those processes comply with organizational policies, project management plan commitments, and relevant regulations. A quality audit examines the project’s actual practices (how status is reported, how change requests are processed, how deliverables are reviewed before acceptance, how risks are tracked, how communication is documented) and identifies gaps between actual practice and committed standards. Audits may be conducted by internal quality assurance teams, by external auditors, or by the project manager through a structured self-review of their own processes, which trades some independence for convenience. The audit output is a report of findings and recommendations, not a judgment about deliverable quality.

Checklists: Structured verification tools used to ensure that required quality assurance activities have been performed. Process checklists (was the sprint review conducted? Was the change request assessed for cost and schedule impact? Was the stakeholder communication sent according to the communications management plan?) are different from deliverable checklists (does the feature meet the acceptance criteria?). Both are legitimate tools, but in the context of quality assurance, process checklists are the primary instrument.
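As a rough illustration of how a process checklist can feed a compliance figure, the sketch below scores a checklist built from the three example questions above. The `ChecklistItem` class, the function names, and the pass/fail values are hypothetical and not part of PMBOK 8.

```python
from dataclasses import dataclass


@dataclass
class ChecklistItem:
    question: str    # process question, e.g. "Was the sprint review conducted?"
    performed: bool  # True if evidence shows the activity took place


def compliance_rate(items: list[ChecklistItem]) -> float:
    """Return the share of checklist items performed, from 0.0 to 1.0."""
    if not items:
        raise ValueError("checklist is empty")
    return sum(item.performed for item in items) / len(items)


def gaps(items: list[ChecklistItem]) -> list[str]:
    """Return the questions whose activity was not performed."""
    return [item.question for item in items if not item.performed]


# Hypothetical results for one reporting period.
checklist = [
    ChecklistItem("Was the sprint review conducted?", True),
    ChecklistItem("Was the change request assessed for cost and schedule impact?", False),
    ChecklistItem("Was the stakeholder communication sent per the communications management plan?", True),
]

print(f"Process compliance: {compliance_rate(checklist):.0%}")  # Process compliance: 67%
print("Gaps:", gaps(checklist))
```

The gap list, rather than the percentage, is usually what belongs in the quality report, because each gap can be turned into a recommendation.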

Data representation — affinity diagrams, cause-and-effect diagrams, flowcharts: Affinity diagrams organize a large collection of quality findings into logical categories, making patterns visible that would be invisible in an unorganized list. Cause-and-effect diagrams (also known as fishbone or Ishikawa diagrams) are used to identify the root causes of process quality problems: why did the testing process fail to catch this defect? Why were status reports late for three consecutive reporting periods? Flowcharts map the actual sequence of execution processes against the planned sequence, making deviations visible and allowing the team to redesign processes for improved quality and efficiency.

Decision-making: Quality assurance findings often require decisions about whether to continue with the current process, modify it through a change request, or trigger a more fundamental process redesign. Multi-criteria decision analysis, voting, and expert judgment are all valid decision-making approaches in this context. The key principle is that quality assurance decisions should be made based on evidence (audit findings, process data) rather than on intuition or organizational hierarchy.

Problem-solving: When quality audits identify process failures or inefficiencies, the project manager must lead a structured problem-solving effort to address the root cause, not just the symptom. The PDCA (Plan-Do-Check-Act) cycle is a widely used framework for quality problem-solving in project management: define the problem (Plan), implement a corrective process change (Do), measure its effect (Check), and institutionalize the improvement if effective (Act).

Process improvement: The proactive identification and implementation of improvements to project processes before problems occur. Process improvement in quality assurance is not reactive (fixing problems) — it is proactive (identifying opportunities to increase process efficiency and effectiveness). Retrospectives in agile projects, process benchmarking against organizational best practices, and facilitated process review workshops are all process improvement tools.

Outputs explained

Quality reports: Documents that summarize the results of quality assurance activities performed during a reporting period. A quality report typically includes: quality audit results and findings; process compliance status (which planned processes are being followed and which are not); identified process improvement recommendations; quality metrics trends (defect rates, test coverage, review completion rates); and escalations requiring sponsor or stakeholder attention. Quality reports are not deliverable inspection reports — they are process governance documents.

Change requests: Quality assurance findings that reveal process gaps, non-compliance with standards, or opportunities for significant process improvement typically generate change requests. A quality audit that finds that peer code reviews are not being conducted according to the quality management plan will generate a change request to either reinstate the process or formally adjust the plan to reflect a different acceptable standard. Quality-driven change requests flow to the Assess and Implement Changes process for evaluation and decision.

4. How to Apply the Process Step by Step

Step 1 — Define quality assurance activities in the quality management plan

Before execution begins, the quality management plan must define which quality assurance activities will be performed, on what schedule, by whom, and against what standards. At minimum, this should include: the frequency and scope of quality audits; the checklists that will be used for process verification; the quality metrics that will be tracked (defect rates, review completion rates, compliance checkpoints); and the reporting format and cadence for quality reports. A quality management plan that defines only quality control activities (deliverable inspection) but not quality assurance activities (process verification) is incomplete.
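One way to test a plan against the minimum list above is to treat it as structured data and look for missing quality assurance elements. This is a minimal sketch: the dictionary layout, the key names, and the sample values are assumptions made for illustration, not a PMBOK 8 format.

```python
# The four minimum elements named in Step 1, using hypothetical key names.
REQUIRED_QA_ELEMENTS = ("audits", "checklists", "metrics", "reporting")


def missing_qa_elements(plan: dict) -> list[str]:
    """Return the quality assurance elements that are absent or empty in the plan."""
    qa = plan.get("quality_assurance", {})
    return [key for key in REQUIRED_QA_ELEMENTS if not qa.get(key)]


# Hypothetical plan: quality control is covered, quality assurance only partly.
plan = {
    "quality_control": {"inspections": ["acceptance testing"]},
    "quality_assurance": {
        "audits": {"frequency_weeks": 6, "scope": ["change control", "status reporting"]},
        "checklists": ["sprint review compliance"],
        "metrics": [],  # nothing defined yet, so it counts as missing
    },
}

print(missing_qa_elements(plan))  # ['metrics', 'reporting']
```

A plan that contains only the `quality_control` section would return all four elements, which is the incomplete plan described above.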

Step 2 — Conduct quality audits at defined intervals

Execute the planned quality audits at the intervals defined in the quality management plan. For each audit, review the relevant project documents (change log, issue log, communications register, status reports) and interview the PM and team members to assess actual process compliance. Document every finding: process gaps, non-compliance instances, and positive findings (processes being performed better than planned). Quality audits should be constructive — their purpose is process improvement, not fault attribution.

Step 3 — Analyze findings with data representation tools

After each audit, use cause-and-effect diagrams to understand the root cause of identified process failures. Use affinity diagrams to categorize multiple findings into themes (communication processes, change management, quality control, resource management) and identify which process category generates the most quality risks. Use flowcharts to map actual processes against planned processes and identify where deviations are occurring.
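The theme-counting part of this step can be sketched in a few lines. The example assumes each finding has already been tagged with a theme during a team affinity session; the findings and tags below are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical audit findings as (theme, description) pairs.
findings = [
    ("communication", "Status report late for three consecutive periods"),
    ("change management", "Change implemented without a formal change request"),
    ("communication", "Sprint review held without the product owner proxy"),
    ("quality control", "Peer code review skipped for two work items"),
]

# Group descriptions under their theme, as an affinity diagram would.
themes = defaultdict(list)
for theme, description in findings:
    themes[theme].append(description)

# Count findings per theme to see which process category carries the most risk.
counts = Counter({theme: len(items) for theme, items in themes.items()})
top_theme, top_count = counts.most_common(1)[0]

print(top_theme, top_count)  # communication 2
```

The grouping itself is still a judgment exercise for the team; the code only makes the resulting pattern easy to report.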

Step 4 — Generate quality reports

Produce quality reports at the cadence defined in the quality management plan and reflected in the communications management plan. Reports should present findings objectively, including both compliance successes and gaps. All recommendations for process improvement should be specific and actionable, not generic observations. Quality reports are distributed to the sponsor, the project management team, and any quality oversight stakeholders.

Step 5 — Submit change requests for process improvements

For every quality audit finding that requires a process change, generate a formal change request through the Assess and Implement Changes process. This ensures that process improvements are evaluated for their impact on schedule, cost, and scope before being implemented — and that the improved process becomes part of the documented, approved project management approach.

5. When to Apply the Process

Mandatory scenarios

- Throughout project execution: Manage Quality Assurance is continuous. It does not have a defined start and end point within the execution phase — it is active as long as project processes are being performed.

- Before phase gates and milestone reviews: Quality assurance findings are essential inputs to phase gate reviews. The organization’s decision to authorize the next phase should include an assessment of whether the processes used in the current phase met the quality standards defined in the project management plan.

- When regulatory or contractual quality standards apply: In regulated industries, quality assurance is not optional — it is a contractual or legal requirement. Evidence of quality assurance activities (audit records, quality reports) must be produced and archived as project documentation.

Recommended scenarios

- After significant team changes: When key team members join or leave during execution, the processes that were operating effectively may degrade due to knowledge loss. A targeted quality audit after a significant team change ensures that process continuity is maintained.

- After a significant scope change: Large scope changes often require changes to execution processes. Quality assurance after a scope change confirms that the processes have been updated to match the new scope and that the team is applying the updated processes consistently.

6. Practical Examples

Example 1 — Website Launch: Project Phoenix

Context: Alex Morgan, PMP, is managing Project Phoenix — a 90-day website redesign and CRM integration for TechCorp, led by CEO Sarah Chen. Budget: $72,250. Approach: agile with 2-week sprints.

How Manage Quality Assurance was applied:

Alex included three quality assurance activities in the quality management plan at initiation: (1) a mid-project quality audit at Sprint 5 to assess process compliance; (2) sprint review compliance tracking (was the sprint review conducted on schedule with the required attendees?); and (3) a change request process compliance check (were all scope changes going through the formal change request channel or being implemented informally?).

At Sprint 5, Alex conducted the planned quality audit. Three findings emerged: first, two sprint reviews had occurred without the client’s marketing representative (the defined product owner proxy), reducing the feedback loop quality; second, one change had been implemented by a developer without a formal change request, bypassing the agreed process; third, the requirements traceability matrix had not been updated after Sprint 3’s scope adjustment, creating a gap in traceability documentation.

Alex produced a quality report with three specific corrective action change requests: mandatory product owner attendance at sprint reviews (or a designated proxy), a team-wide reminder of the change request protocol with consequences for bypassing it, and a one-time requirements traceability matrix update assigned to the PM for completion before Sprint 6. Sarah Chen reviewed and approved all three change requests in the next steering committee call. The quality report became part of the project’s official record and was referenced when the client signed the project acceptance document at completion.

Example 2 — SaaS PM Platform: Project ProjectAdm

Context: Eduardo Montes leads ProjectAdm development — a SaaS PM platform. Team: 8 developers + 2 designers + 1 QA. Duration: 18 months. Hybrid approach with formal compliance milestones and agile sprint cycles.

How Manage Quality Assurance was applied:

For ProjectAdm, quality assurance operated on two tracks matching the hybrid project approach. On the predictive track, formal quality audits were conducted at each major compliance milestone: before the GDPR compliance submission, before the AWS multi-region deployment go-live, and before the public launch. Each audit reviewed process compliance against the quality management plan, the compliance requirements checklist, and the infrastructure standards defined in the sourcing strategy plan.

The GDPR compliance quality audit at month 10 identified that the privacy policy update process, which required review by the external legal counsel before publication, had not been followed for a routine wording change made three weeks earlier. The change had been made directly to the live production policy text without legal review. The audit finding generated an immediate change request to revert the wording to its last legally reviewed version and to implement a mandatory approval gate in the content management system that would prevent any privacy policy changes from being published without documented legal sign-off. The change was implemented before the formal GDPR compliance submission was made. The auditor reviewing the compliance submission noted the process control as a positive finding.
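The approval gate in this example could look something like the sketch below. It is an illustration only: the article does not describe how ProjectAdm's content management system implemented the gate, and `PolicyChange`, `ApprovalGateError`, and `publish` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PolicyChange:
    description: str
    legal_signoff_by: Optional[str] = None  # reviewing counsel, None if not reviewed


class ApprovalGateError(Exception):
    """Raised when a change reaches publication without documented legal sign-off."""


def publish(change: PolicyChange) -> str:
    """Publish a privacy policy change only if legal sign-off is recorded."""
    if not change.legal_signoff_by:
        raise ApprovalGateError(
            f"Blocked: '{change.description}' has no documented legal sign-off"
        )
    return f"Published: {change.description} (approved by {change.legal_signoff_by})"


reviewed = PolicyChange("Routine wording change", legal_signoff_by="External counsel")
print(publish(reviewed))

try:
    publish(PolicyChange("Routine wording change"))
except ApprovalGateError as error:
    print(error)
```

The design point is that the control lives in the publishing path itself, so compliance does not depend on anyone remembering the procedure.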

On the agile track, quality assurance was embedded in the sprint retrospective format: each retrospective included a structured 20-minute process review segment asking “Did we follow our definition of done? Were there any process shortcuts taken that we need to address?” This lightweight, iterative quality assurance approach surfaced two process improvement opportunities in the first six sprints: the definition of done needed an explicit browser compatibility testing step, and the design review process needed a minimum turnaround time commitment to prevent design feedback from blocking sprint completions.

7. Free and Recommended Templates

| Document | Free blank template |
|---|---|
| Quality Management Plan: quality objectives, standards, assurance activities, metrics, reporting cadence | Download free template |
| Quality Audit Checklist: process compliance verification for each major project management process area | Download free template |

Recommended digital tools

- Confluence / Notion: For maintaining quality management plans, audit reports, and quality checklists as versioned, collaborative documents.

- Jira / Azure DevOps: For tracking process compliance metrics (sprint review attendance, change request throughput, definition of done completion rates) within the project management workflow.

- Google Workspace / Microsoft 365: For structured quality audit reports and quality report distribution to stakeholders.

- Miro / Mural: For facilitating cause-and-effect analysis and affinity diagramming sessions with distributed teams.

8. Five Common Errors — and How to Avoid Each One

Error 1 — Confusing quality assurance with quality control

Why it happens: The terms are used interchangeably in casual project management conversation and in many organizational quality frameworks that predate PMBOK 8. The result is that projects invest heavily in deliverable inspection (quality control) while having no systematic process for verifying that the processes used to build those deliverables meet stakeholder expectations (quality assurance).

How to avoid it: Document both quality assurance activities (process audits, compliance checks) and quality control activities (deliverable inspections, testing protocols) explicitly in the quality management plan. Assign different owners if the project scale warrants it. Review the quality management plan to confirm that both dimensions are covered before execution begins.

Error 2 — Scheduling quality audits only at project closure

Why it happens: Quality audits are perceived as bureaucratic retrospectives that happen at the end of the project. This perception misses their entire value proposition: quality audits are most valuable when performed during execution, when their findings can drive process improvements that benefit the remaining work.

How to avoid it: Schedule quality audits at defined intervals throughout execution: at least at each phase boundary, but ideally at regular intervals within each phase (every 4–6 weeks on a 6-month project). An audit conducted in week 8 of a 6-month (roughly 26-week) project can drive process improvements that benefit about 4 months of remaining execution. An audit conducted in week 23 of the same project leaves only about 3 weeks in which improvements can take effect.
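The week 8 versus week 23 comparison is simple arithmetic, sketched here under the assumption that a 6-month project runs about 26 weeks. The function names and the 6-week interval are illustrative choices.

```python
TOTAL_WEEKS = 26  # assumption: a 6-month project is roughly 26 weeks


def audit_weeks(total_weeks: int, interval_weeks: int) -> list[int]:
    """Return the weeks on which audits fall when held every interval_weeks."""
    return list(range(interval_weeks, total_weeks, interval_weeks))


def weeks_remaining(total_weeks: int, audit_week: int) -> int:
    """Return how many weeks of execution can still benefit from an audit."""
    if not 0 < audit_week <= total_weeks:
        raise ValueError("audit_week must fall within the project")
    return total_weeks - audit_week


print(audit_weeks(TOTAL_WEEKS, 6))       # [6, 12, 18, 24]
print(weeks_remaining(TOTAL_WEEKS, 8))   # 18
print(weeks_remaining(TOTAL_WEEKS, 23))  # 3
```

Eighteen remaining weeks is a little over four months, while three weeks leaves almost no room for a process change to pay off.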

Error 3 — Quality reports that describe problems without actionable recommendations

Why it happens: The quality audit finds problems, the quality report describes them, and the report is filed. No one acts on the findings because the findings are observations rather than recommendations, and the observations have no assigned owners or resolution timelines.

How to avoid it: Every quality report finding must include a specific, actionable recommendation: what change is required, who is responsible for implementing it, by what date, and what will be measured to confirm that the improvement has been achieved. Quality reports without actionable recommendations are historical documents; quality reports with actionable recommendations are governance instruments.
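The four elements of an actionable recommendation (what change, who, by when, and how success is measured) can be enforced with a small record type. The sketch below is hypothetical: the `Finding` class, its field names, and the sample date are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

REQUIRED_FIELDS = ("recommendation", "owner", "due", "success_measure")


@dataclass
class Finding:
    observation: str
    recommendation: Optional[str] = None   # what change is required
    owner: Optional[str] = None            # who implements it
    due: Optional[date] = None             # by what date
    success_measure: Optional[str] = None  # what is measured to confirm the improvement

    def missing_fields(self) -> list[str]:
        """Return the required fields that have not been filled in."""
        return [name for name in REQUIRED_FIELDS if getattr(self, name) is None]

    def is_actionable(self) -> bool:
        return not self.missing_fields()


observation_only = Finding("Peer code reviews are not being conducted")
complete = Finding(
    observation="Peer code reviews are not being conducted",
    recommendation="Reinstate peer review for every merge",
    owner="Development lead",
    due=date(2026, 1, 15),  # hypothetical target date
    success_measure="Review completion rate per sprint",
)

print(observation_only.missing_fields())  # ['recommendation', 'owner', 'due', 'success_measure']
print(complete.is_actionable())           # True
```

A report generator could refuse to issue a quality report while any finding still returns a non-empty `missing_fields()` list.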

Error 4 — Quality assurance performed only by the project manager

Why it happens: Quality assurance is perceived as a PM responsibility that does not require team involvement. The PM reviews the process, writes the report, and considers the obligation fulfilled.

How to avoid it: PMBOK 8 notes that quality assurance tasks may be performed by the project management team, an external entity, or both. In practice, the most effective quality assurance combines the PM’s governance perspective with the team’s operational knowledge. Include quality assurance as a standing agenda item in team retrospectives. In high-stakes projects, engage an independent quality assurance reviewer (internal quality team or external auditor) to bring an objective perspective that the embedded PM cannot provide.

Error 5 — Not updating the quality management plan when processes change

Why it happens: During execution, processes evolve organically in response to practical realities — teams adopt new tools, adjust their review cadence, or change their communication protocols. The quality management plan is not updated to reflect these changes, creating a growing gap between the documented process and the actual process.

How to avoid it: Treat the quality management plan as a living document. Every significant process change — whether driven by a quality audit finding, a team preference, or a new tool adoption — should trigger a formal update to the quality management plan through the Assess and Implement Changes process. A quality management plan that accurately documents the project’s actual processes is a governance asset; one that documents an outdated ideal is a compliance liability.

9. Tailoring: Predictive, Agile, and Hybrid

PMBOK 8 explicitly states that in agile or adaptive projects, quality assurance can be performed formally or informally, based on context. In predictive projects, quality assurance is usually performed in a formal capacity, under the expectation that strong process quality will minimize variances in an otherwise stable project.

Predictive approach

- Formal quality audits: Conducted at defined intervals by a designated quality assurance authority (internal QA team, project sponsor’s representative, or external auditor). Findings documented in formal quality reports distributed to the sponsor and governance committee.

- Process compliance dashboards: Metrics tracking compliance with defined processes across all management areas, reported at each steering committee meeting.

- Regulatory alignment: In regulated environments, quality assurance activities are designed specifically to generate the audit trail required by the applicable regulatory framework (GMP, ISO 9001, GDPR, etc.).

Agile approach

- Retrospectives as quality assurance: Sprint retrospectives serve as the primary quality assurance mechanism — structured reviews of whether the team’s processes are working effectively, with specific improvement actions committed for the next sprint.

- Definition of done as quality standard: The definition of done is a quality assurance instrument — it defines the process requirements that every increment must satisfy before being considered complete. Monitoring definition of done compliance across sprints is a lightweight quality assurance practice.

- Informal but intentional: Quality assurance is less formal in agile contexts, but it is not absent. The retrospective, the definition of done review, and the sprint review attendance tracking are all quality assurance activities — they are performed systematically, even if not documented in formal quality reports.
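Monitoring definition of done compliance across sprints can be as light as the sketch below. The sprint data and the 90% threshold are hypothetical; each boolean records whether one completed work item satisfied the full definition of done.

```python
def dod_compliance(sprints: dict[str, list[bool]]) -> dict[str, float]:
    """Return, per sprint, the fraction of items that met the definition of done."""
    return {
        name: sum(results) / len(results)
        for name, results in sprints.items()
        if results  # skip sprints with no completed items
    }


def below_threshold(rates: dict[str, float], threshold: float) -> list[str]:
    """Return the sprints whose compliance fell under the threshold."""
    return [name for name, rate in rates.items() if rate < threshold]


# Hypothetical results: one boolean per completed work item.
sprints = {
    "Sprint 1": [True, True, False, True],
    "Sprint 2": [True, True, True, True],
}

rates = dod_compliance(sprints)
print(rates)                        # {'Sprint 1': 0.75, 'Sprint 2': 1.0}
print(below_threshold(rates, 0.9))  # ['Sprint 1']
```

A flagged sprint is a natural agenda item for the next retrospective, which keeps the practice informal but still systematic.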

Hybrid approach (ProjectAdm model)

- Dual-track quality assurance: Formal audits at compliance milestones (predictive track); retrospective-based process improvement at sprint boundaries (agile track).

- Integrated quality reporting: A unified quality report that covers both tracks, presented at the monthly steering committee meeting.

10. Interactions with Other Processes and Domains

| Process | Domain | Relationship |
|---|---|---|
| Integrate and Align Project Plans (Process 2) | Governance | The quality management plan, integrated into the overall project management plan, is the primary input for all quality assurance activities |
| Manage Project Execution (Process 4) | Governance | Quality assurance audits evaluate the processes being used during execution; execution generates the data that quality assurance analyzes |
| Assess and Implement Changes (Process 8) | Governance | Change requests generated by quality audit findings flow to Assess and Implement Changes for evaluation and approval |
| Monitor and Control Project Performance (Process 7) | Governance | Quality reports produced by quality assurance are inputs to performance monitoring; performance monitoring data feeds quality assurance analysis |
| Quality Control (Scope/Delivery domain) | Scope | Quality assurance audits may identify systemic quality control failures; quality control defect data informs quality assurance process improvement analysis |

11. Quick-Application Checklist

- Does the quality management plan explicitly define quality assurance activities (process audits, checklists) separate from quality control activities (deliverable inspections)?

- Are quality audits scheduled at regular intervals throughout execution, not only at project closure?

- Is there a defined process compliance checklist for each major management area (change management, risk management, communication, issue management)?

- Are quality reports produced after each audit and distributed to the sponsor and relevant stakeholders?

- Does every quality report finding include a specific, actionable recommendation with a named owner and target resolution date?

- Are quality audit findings that require process changes submitted as formal change requests through the Assess and Implement Changes process?

- In agile contexts, does each sprint retrospective include a structured process quality review segment?

- Is the quality management plan updated when execution processes evolve, ensuring it accurately documents actual practices?

- Are the organizational quality standards, regulations, and policies that apply to this project documented in the quality management plan and referenced in quality audits?

- Is the quality assurance function visible to key stakeholders — do they have access to quality reports and understand the process compliance status of the project?
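The checklist above can also be tracked as structured data, so that open items stay visible between audits. The following is a minimal Python sketch, not a PMBOK-defined artifact: the `ChecklistItem` class, the `open_gaps` function, the shortened question texts, and the sample answers are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChecklistItem:
    """One yes/no question from the checklist (hypothetical structure)."""
    question: str
    answer: Optional[bool] = None  # None means "not yet assessed"


def open_gaps(items: list[ChecklistItem]) -> list[str]:
    """Return the questions answered 'no' or not yet assessed."""
    return [item.question for item in items if item.answer is not True]


# Illustrative answers only; a real project would record its own.
checklist = [
    ChecklistItem("QA activities defined separately from QC activities?", True),
    ChecklistItem("Audits scheduled throughout execution, not only at closure?", False),
    ChecklistItem("Quality reports distributed after each audit?", None),
]

for gap in open_gaps(checklist):
    print("Governance gap:", gap)
```

Treating an unassessed item the same as a "no" is a deliberate choice in this sketch: a question nobody has answered is itself a gap in process visibility.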

Conclusion and Next Steps

Manage Quality Assurance is the governance process that transforms good intentions into trustworthy evidence. Project Phoenix’s quality audit at Sprint 5 caught three process compliance gaps before they could compromise the client relationship. ProjectAdm’s GDPR audit at month 10 caught a privacy policy process failure before it became a compliance violation. In both cases, the value of quality assurance was not in confirming that everything was perfect — it was in identifying specific, correctable gaps before they became irreversible problems.

Three takeaways for immediate application:

- Quality assurance is about processes, not products: If your quality management plan only describes how deliverables will be inspected, it is a quality control plan. A quality assurance plan describes how you will verify that the processes used to create those deliverables meet stakeholder expectations. Both are necessary. Neither is sufficient alone.

- Quality audits during execution are worth far more than audits at closure: An audit finding in week 8 can be corrected in a week and benefit four months of remaining execution. An audit finding in the final week can only inform the lessons learned register. Schedule audits early and often.

- Quality reports without actionable recommendations are documents without governance value: Every finding must become a specific recommendation, with a named owner and a target date. Otherwise, the audit is an observation exercise, not a governance instrument.
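The third takeaway can be read as a simple rule: a finding is actionable only when it carries a recommendation, a named owner, and a target date. The sketch below illustrates that rule in Python under stated assumptions. The `Finding` fields and the `is_actionable` helper are hypothetical names, not part of PMBOK 8 or of any specific tool, and the sample findings are invented for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Finding:
    """A single quality report finding (hypothetical field names)."""
    description: str
    recommendation: str = ""
    owner: str = ""
    target_date: Optional[date] = None


def is_actionable(finding: Finding) -> bool:
    """True only if the finding has a recommendation, a named owner, and a target date."""
    return bool(
        finding.recommendation.strip()
        and finding.owner.strip()
        and finding.target_date is not None
    )


# Illustrative data only.
findings = [
    Finding(
        description="Peer reviews not recorded",
        recommendation="Log every review in the tracker",
        owner="Dev lead",
        target_date=date(2026, 4, 15),
    ),
    Finding(description="Change log incomplete"),  # observation only: no owner or date
]

observations_only = [f.description for f in findings if not is_actionable(f)]
print(observations_only)
```

A check like this could run before a quality report is distributed, so that findings without an owner or date are completed rather than published as observations.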

Your concrete next step: Open your quality management plan. Locate the quality assurance section. Check: are there scheduled process audits? Are there checklists for process compliance? When was the last quality report produced and distributed? If the answer to either of the first two questions is “no,” or you cannot say when the last report went out, you have identified a governance gap. Address it before the next phase review, because the cost of a process compliance finding from your client or auditor is many times greater than the cost of finding and fixing it yourself.



Disclaimer

This article is an independent educational interpretation of the PMBOK® Guide – Eighth Edition, developed for informational purposes by ProjectManagement.com.br. It does not reproduce or redistribute proprietary PMI content. All trademarks, including PMI, PMBOK, and Project Management Institute, are the property of the Project Management Institute, Inc. For access to the complete and official content, purchase the guide from Amazon or download it for free at https://www.pmi.org/standards/pmbok if you are a PMI member.
